For too long, the industry has operated under the assumption that if you aren’t writing Python, you’re sitting on the sidelines of the Generative AI revolution. But as cloud API bills skyrocket and data privacy becomes a non-negotiable architectural requirement, the “vibe” is shifting. Moving from a simple prompt to a production-ready, data-aware application requires more than just a script; it requires robust orchestration and data hygiene.

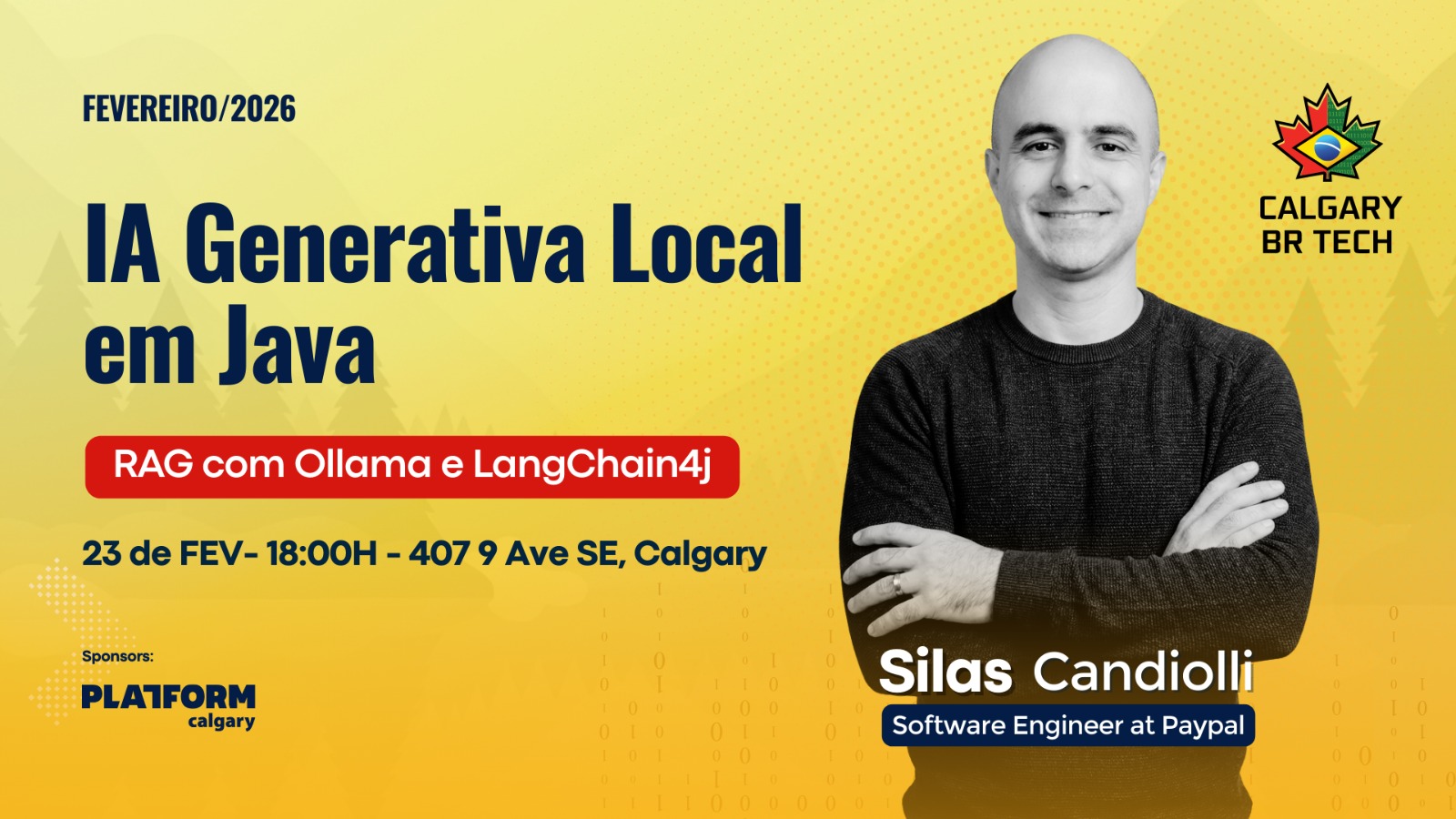

At the February Calgary BR Tech event, Silas Candiolli – a Senior Software Engineer at PayPal with 16 years of Java expertise – demonstrated that the Java ecosystem is not just participating in this shift, but leading it. By leveraging local Large Language Models (LLMs) and Retrieval-Augmented Generation (RAG), Silas made the case that “legacy” developers are the new “Software Integrators.”

Here are five surprising lessons from his deep dive into local AI development.

1. The End of “Pay-per-Token” Anxiety

The traditional path to AI development relies on cloud-hosted models such as Gemini or GPT-4. However, every request carries a financial cost, which creates friction during the iterative proof-of-concept (POC) phase. Ollama removes that friction by running models entirely on a developer’s local machine.

“If it’s up to me to create, to consult my API in a Gemini, and pay for each request, or for token… these things start to get a little more complicated for me to do POCs and tests that are of my interest. That’s why Ollama facilitates a lot for those who want to do tests and discover what it’s like to use it.” — Silas Candiolli

By removing the “pay-per-token” anxiety, developers gain the freedom to fail fast and experiment frequently, democratizing AI experimentation for individual engineers and small teams without a dedicated R&D budget.
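To make “running entirely on a developer’s local machine” concrete, here is a minimal sketch that calls Ollama’s local REST API using only the JDK’s `java.net.http` client. It assumes Ollama is listening on its default port 11434 and that the `mistral` model has already been pulled; the class and method names are illustrative, and the demo itself used LangChain4j rather than raw HTTP calls.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Minimal sketch: sends one prompt to a locally running Ollama server.
// Assumes Ollama on its default port and the "mistral" model already pulled.
final class LocalPrompt {
    static final String OLLAMA_URL = "http://localhost:11434/api/generate";

    // Builds the JSON body for Ollama's /api/generate endpoint.
    // "stream": false asks for one JSON reply instead of a stream of chunks.
    static String requestBody(String model, String prompt) {
        return "{\"model\":\"" + escape(model) + "\",\"prompt\":\"" + escape(prompt)
                + "\",\"stream\":false}";
    }

    // Minimal JSON string escaping: backslashes first, then quotes and newlines.
    static String escape(String s) {
        return s.replace("\\", "\\\\").replace("\"", "\\\"").replace("\n", "\\n");
    }

    // Sends the prompt and returns Ollama's raw JSON reply; the generated
    // text is in its "response" field. No API key, no per-token billing.
    static String ask(String model, String prompt) throws Exception {
        HttpClient client = HttpClient.newHttpClient();
        HttpRequest request = HttpRequest.newBuilder(URI.create(OLLAMA_URL))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(requestBody(model, prompt)))
                .build();
        HttpResponse<String> reply = client.send(request, HttpResponse.BodyHandlers.ofString());
        return reply.body();
    }
}
```

With Ollama running, `LocalPrompt.ask("mistral", "Summarize RAG in one sentence")` returns the model’s JSON reply, so a POC can be iterated on as often as needed at no per-request cost.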

2. Java’s Modern AI Stack: Beyond the “Legacy” Label

Java developers are often characterized as late adopters, but Silas’s demonstration showed a bleeding-edge stack that rivals any Python environment. The combination of Quarkus and LangChain4j allows developers to integrate AI as easily as adding a few Maven dependencies.

In his live demonstration, Silas utilized a high-performance stack optimized for modern backend engineering:

- Java 24/25: Staying on the latest releases to leverage modern runtime performance.

- Quarkus: A Kubernetes-native framework for building lean, fast microservices.

- LangChain4j: The primary orchestrator that bridges Java applications with LLMs, serving as the Java-centric alternative to Python’s LangChain.

- Mistral: Specifically chosen as the local model because it balances performance with hardware constraints (Silas noted his machine was limited to 16GB of RAM).

- PGVector: The pgvector PostgreSQL extension, which adds a vector column type and similarity-search operators, turning a standard database into a “vector store” that acts as the application’s long-term memory.
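PGVector’s job as “long-term memory” boils down to storing embeddings and ranking them by similarity to a query embedding. The sketch below is an in-memory stand-in, not PGVector itself: it ranks entries by cosine similarity, the quantity behind pgvector’s `<=>` cosine-distance operator (distance = 1 minus similarity). The toy three-dimensional vectors are made up for illustration; real embeddings come from an embedding model and typically have hundreds of dimensions.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// In-memory stand-in for a vector store such as PGVector: keeps
// (text, embedding) pairs and returns the entries most similar to a query.
final class InMemoryVectorStore {
    record Entry(String text, double[] embedding) {}

    private final List<Entry> entries = new ArrayList<>();

    void add(String text, double[] embedding) {
        entries.add(new Entry(text, embedding));
    }

    // Cosine similarity: dot(a, b) / (|a| * |b|), in [-1, 1].
    static double cosine(double[] a, double[] b) {
        double dot = 0, normA = 0, normB = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        return dot / (Math.sqrt(normA) * Math.sqrt(normB));
    }

    // Returns the texts of the k entries closest to the query, best match first.
    List<String> search(double[] query, int k) {
        return entries.stream()
                .sorted(Comparator.comparingDouble((Entry e) -> cosine(query, e.embedding())).reversed())
                .limit(k)
                .map(Entry::text)
                .toList();
    }
}
```

In a RAG pipeline, the top-k texts returned by `search` are what gets pasted into the prompt as context for the model.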

3. Your Model is Only as Smart as Your Data Engineering

A common trap for developers is blaming “hallucinations” on the model’s lack of intelligence. Silas’s demo provided a stark reality check: RAG is more of a data engineering problem than an AI problem.

During the live bookstore chatbot demo, the model failed to find a “Golang” book and gave nonsensical answers about Python books. The culprit wasn’t the Mistral model; it was the “dirty” source data. In one relatable example, Silas found that his “Data Engineering with Python” book had been incorrectly tagged with “Java” and “OOP” in the CSV. Because the vector store had been populated with embeddings of these mislabeled records, retrieval surfaced the wrong context, and the model’s apparent hallucinations were really the developer’s own data errors echoed back. The lesson is clear: populating the vector store requires rigorous data hygiene before the first prompt is ever sent.
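Part of that hygiene can be automated before anything reaches the vector store. The following is a hypothetical linter, assuming a catalogue CSV with `title,tags` rows and `;`-separated tags (the real file’s layout was not shown). It flags rows whose title names a programming language that is missing from the row’s tags, which would have caught the mislabeled Python book. The language list is an assumption, and the naive comma split does not handle quoted CSV fields.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Set;
import java.util.stream.Collectors;

// Hypothetical pre-ingestion check for rows of the form "title,tag1;tag2".
final class CatalogueLinter {
    // Language names to look for in titles (assumed list; extend as needed).
    private static final Set<String> LANGUAGES = Set.of("java", "python", "go", "kotlin");

    // Lower-cases a word and maps the "golang" alias onto "go".
    static String normalize(String word) {
        String w = word.trim().toLowerCase();
        return w.equals("golang") ? "go" : w;
    }

    // Returns one warning per suspicious finding; empty means the rows look clean.
    static List<String> lint(List<String> csvRows) {
        List<String> warnings = new ArrayList<>();
        for (String row : csvRows) {
            // Naive split into title and tags; quoted fields are not handled.
            String[] cols = row.split(",", 2);
            if (cols.length < 2) {
                warnings.add("Malformed row: " + row);
                continue;
            }
            String title = cols[0].trim();
            Set<String> tags = Arrays.stream(cols[1].split(";"))
                    .map(CatalogueLinter::normalize)
                    .collect(Collectors.toSet());
            // Flag any language named in the title but absent from the tags.
            for (String word : title.split("[^A-Za-z0-9]+")) {
                String lang = normalize(word);
                if (LANGUAGES.contains(lang) && !tags.contains(lang)) {
                    warnings.add("\"" + title + "\" mentions " + lang + " but is tagged " + tags);
                }
            }
        }
        return warnings;
    }
}
```

Running such a check as a gate in the ingestion job means bad rows are fixed at the source instead of being embedded and later surfacing as “hallucinations.”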

4. Moving from “Vibe-Based” Testing to Scientific Metrics

In AI development, “it feels like it’s working” is a dangerous metric. To build production-grade systems, Silas advocated moving toward RAG evaluation frameworks such as Ragas, along with BLEU scores, to measure performance objectively.

By quantifying the relationship between the user’s question, the retrieved context, and the final answer, developers can pinpoint exactly where the system is failing.

| Metric | Significance | Ideal Range |
|---|---|---|
| Context Relevance | Did the system retrieve the correct documents for the query? | 0.8+ |
| Faithfulness | Did the model answer using only the provided context? (Prevents hallucination.) | 0.9 – 1.0 |
| Answer Relevance | Does the response actually address the user’s specific problem? | 0.8+ |
| BLEU Score | Measures how close the AI’s response is to a “reference” human answer. | 0.7 – 0.9 |
| Semantic Similarity | Uses embeddings to check whether the meaning of the answer matches the intent. | 0.8+ |
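To show what the BLEU row actually measures, here is a simplified sentence-level BLEU in plain Java: clipped n-gram precision for n = 1..maxN, combined by a geometric mean and scaled by a brevity penalty. It is a teaching sketch, not a reference implementation: standard BLEU uses up to 4-grams, is usually computed over a whole corpus, and is often smoothed, so its scores will differ from established tools such as sacreBLEU.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.Map;

// Simplified sentence-level BLEU without smoothing: if any n-gram order
// has zero matches, the score is 0.
final class SimpleBleu {

    // Counts the n-grams of order n in a token array.
    static Map<String, Integer> ngrams(String[] tokens, int n) {
        Map<String, Integer> counts = new HashMap<>();
        for (int i = 0; i + n <= tokens.length; i++) {
            String gram = String.join(" ", Arrays.copyOfRange(tokens, i, i + n));
            counts.merge(gram, 1, Integer::sum);
        }
        return counts;
    }

    static double score(String candidate, String reference, int maxN) {
        String[] cand = candidate.trim().toLowerCase().split("\\s+");
        String[] ref = reference.trim().toLowerCase().split("\\s+");
        double logPrecisionSum = 0;
        for (int n = 1; n <= maxN; n++) {
            Map<String, Integer> candCounts = ngrams(cand, n);
            Map<String, Integer> refCounts = ngrams(ref, n);
            int total = 0, clipped = 0;
            for (Map.Entry<String, Integer> e : candCounts.entrySet()) {
                total += e.getValue();
                // Clip each candidate n-gram count by its count in the reference.
                clipped += Math.min(e.getValue(), refCounts.getOrDefault(e.getKey(), 0));
            }
            if (total == 0 || clipped == 0) {
                return 0.0;
            }
            logPrecisionSum += Math.log((double) clipped / total);
        }
        // Brevity penalty: 1 if the candidate is longer than the reference,
        // otherwise exp(1 - r/c) to punish overly short answers.
        double c = cand.length, r = ref.length;
        double bp = c > r ? 1.0 : Math.exp(1 - r / c);
        return bp * Math.exp(logPrecisionSum / maxN);
    }
}
```

For example, `SimpleBleu.score("the cat", "the cat sat on the mat", 2)` has perfect 1-gram and 2-gram precision but scores only exp(-2), about 0.135, because the brevity penalty punishes the short answer. This is also why BLEU needs a trustworthy reference answer to be meaningful.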

Silas emphasized that these metrics allow for automated quality gates: if a response falls below a 0.5 faithfulness score, the system can automatically reject it rather than serving a hallucination to the client.
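Such a gate takes only a few lines. This sketch assumes the faithfulness score has already been computed by a separate evaluation step (Ragas itself is a Python library, so a Java service would receive the score from an external evaluator); the `ScoredAnswer` type and the fallback message are hypothetical, and only the 0.5 threshold comes from the talk.

```java
// Hypothetical quality gate: an answer is served only if its faithfulness
// score (computed elsewhere) meets the threshold; otherwise a safe fallback
// is returned instead of a likely hallucination.
final class QualityGate {
    static final double MIN_FAITHFULNESS = 0.5; // threshold mentioned in the talk

    record ScoredAnswer(String text, double faithfulness) {}

    static String serve(ScoredAnswer answer) {
        if (answer.faithfulness() < MIN_FAITHFULNESS) {
            return "Sorry, I couldn't find a reliable answer in the catalogue.";
        }
        return answer.text();
    }
}
```

Keeping the threshold in one constant makes it easy to tighten toward the 0.9 – 1.0 “ideal” band once the pipeline matures.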

5. The Production Reality Check: The Power of Abstraction

While local models like Mistral via Ollama are perfect for development and privacy, they face hardware limitations. On a standard 16GB RAM machine, complex queries can take minutes to process.

The strategy for production isn’t necessarily to run Ollama on a server; it’s to use LangChain4j as an abstraction layer. Because LangChain4j is model-agnostic, you can develop, test, and validate your RAG logic locally using Ollama to keep costs at zero. When you are ready for production-level scale and speed, you can swap the local model for a cloud-hosted API (like Gemini or OpenAI) with minimal code changes. This flexibility ensures you are never locked into a single provider or hardware configuration.
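The abstraction-layer idea can be illustrated without any library. In this hypothetical sketch, business logic depends only on a `ChatBackend` interface and a configuration value picks the implementation. In a real project, LangChain4j’s own chat-model interface plays this role; all names here are made up, and the two backends are stubs that return canned strings instead of calling Ollama or a cloud API.

```java
// Hypothetical, library-free illustration: application code sees only this interface.
interface ChatBackend {
    String chat(String prompt);
}

// Stub standing in for a local Mistral model served by Ollama (no network call).
final class LocalOllamaBackend implements ChatBackend {
    public String chat(String prompt) {
        return "[local mistral] " + prompt;
    }
}

// Stub standing in for a hosted provider such as Gemini or OpenAI.
final class CloudBackend implements ChatBackend {
    public String chat(String prompt) {
        return "[cloud model] " + prompt;
    }
}

final class BackendFactory {
    // Picks the backend from a configuration value, e.g. an environment
    // variable or a profile-specific property (assumed mechanism).
    static ChatBackend fromConfig(String provider) {
        return switch (provider) {
            case "ollama" -> new LocalOllamaBackend();
            case "cloud" -> new CloudBackend();
            default -> throw new IllegalArgumentException("Unknown provider: " + provider);
        };
    }
}

// Business logic written once against the interface.
final class BookAssistant {
    private final ChatBackend backend;

    BookAssistant(ChatBackend backend) {
        this.backend = backend;
    }

    String recommend(String topic) {
        return backend.chat("Recommend a book about " + topic);
    }
}
```

Moving from local development to production then means changing the configuration value from `ollama` to `cloud`, while `BookAssistant` and the RAG logic around it stay untouched.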

Conclusion: The New Frontier of the “Software Integrator”

The role of the developer is evolving. Silas Candiolli doesn’t view himself as an “AI Specialist,” but as a “Java Specialist” who knows how to integrate AI. The future doesn’t belong to those who can build a model from scratch, but to the “Software Integrators”—engineers who can take existing systems, clean up legacy data, and orchestrate local AI tools to build context-aware applications.

With a few Maven dependencies and a local runner, the power of Generative AI is now a standard part of the Java developer’s toolkit.

If you could run a fully capable AI on your private data without a single byte leaving your server, what would you build first?
